
# SRT Translator: NLLB / SeamlessM4T / Ollama

Translate .srt subtitle files through three backends with a minimal GUI and a practical CLI.

- NLLB (Transformers): lowest resource footprint, medium quality.

- SeamlessM4T (Transformers): medium resource footprint, higher quality.

- Ollama (local LLMs): experimental mode; possibly highest resource footprint, potentially best quality depending on the model (recommended: phi4, if available). You must have Ollama installed and running.

Advanced users can customize the LLM behavior by editing the prompt (see the `_ollama_system_prompt()` function).

## Requirements

- **Python** ≥ 3.9

- **Core packages**: `pip install transformers huggingface_hub langdetect tqdm`

- **PyTorch** (for NLLB/SeamlessM4T): install the CPU, CUDA, or MPS build that matches your platform.

- **Ollama** (only for the Ollama engine): install Ollama and ensure the server is running (`ollama serve` / desktop app); pull a model you want to use (e.g., `ollama pull phi4` when available).

> The first run of NLLB/SeamlessM4T will download several GB of model weights (NLLB-200: around 2.5GB, SeamlessM4T-v2-large: around 10GB).

---

## Quickstart

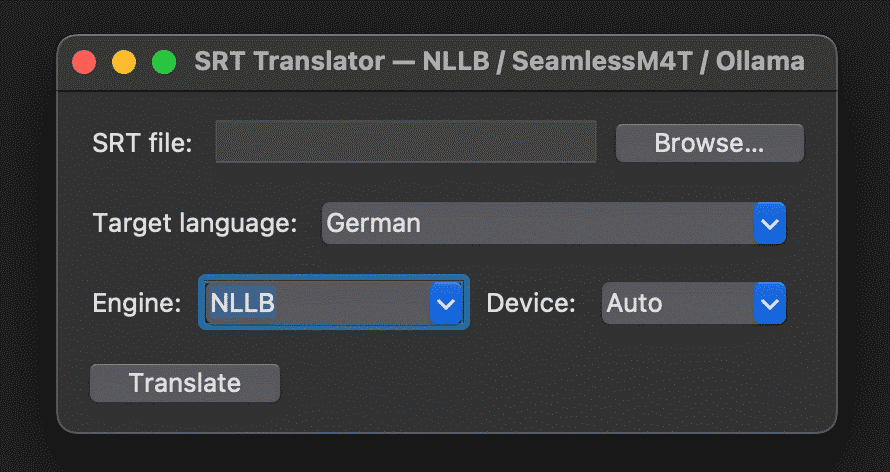

### GUI

```bash
python srt_translator.py
```

- Select the `.srt` file.
- Choose the target language (a name like `German` or a code like `deu_Latn`).
- Select the engine (NLLB / SeamlessM4T / Ollama).
- Click Translate.

### CLI

**NLLB**

```bash
python srt_translator.py input.srt German --engine nllb
```

**SeamlessM4T**

```bash
python srt_translator.py input.srt German --engine seamless --device auto
```

**Ollama**

```bash
# Make sure ollama is running and a model is available (recommended: phi4)
python srt_translator.py input.srt German --engine ollama \
  --ollama-model phi4 --ollama-host localhost --ollama-port 11434
```

Output defaults to `input.<target_code>.srt` (e.g., `input.deu_latn.srt`).
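The default naming rule can be sketched in a few lines. This is only an illustration, not the tool's actual code: `default_output_path` is a hypothetical helper, and lowercasing the code is an assumption inferred from the `input.deu_latn.srt` example.

```python
from pathlib import Path


def default_output_path(input_path: str, target_code: str) -> Path:
    """Build <stem>.<target_code>.srt next to the input file (hypothetical helper)."""
    src = Path(input_path)
    # Lowercasing is an assumption taken from the documented example name.
    return src.with_name(f"{src.stem}.{target_code.lower()}.srt")


print(default_output_path("input.srt", "deu_Latn"))  # input.deu_latn.srt
```

Pass `--out <path>` when a different location or name is needed.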

## Advanced (CLI)

### Language handling

- `target_language`: name (`German`) or NLLB code (`deu_Latn`).
- `--src`: optional source language (name or code). If omitted, the tool auto-detects it from the first lines.
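Accepting either a name or a code could look roughly like the sketch below. The table `NAME_TO_NLLB` and the function `resolve_language` are hypothetical names for illustration, and the mapping is a tiny subset; the real tool may resolve languages differently.

```python
# Illustrative subset only; the actual tool may use a different or larger table.
NAME_TO_NLLB = {
    "german": "deu_Latn",
    "english": "eng_Latn",
    "french": "fra_Latn",
    "spanish": "spa_Latn",
}


def resolve_language(value: str) -> str:
    """Return an NLLB code for a language name or for a code given in any case."""
    cleaned = value.strip().lower()
    # A value that already is a known code passes through in canonical casing.
    for code in NAME_TO_NLLB.values():
        if cleaned == code.lower():
            return code
    try:
        return NAME_TO_NLLB[cleaned]
    except KeyError:
        raise ValueError(f"Unknown language: {value!r}") from None


print(resolve_language("German"))    # deu_Latn
print(resolve_language("deu_latn"))  # deu_Latn
```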

### Engine & device

- `--engine {nllb|seamless|ollama}`
- `--device {auto|cpu|gpu}` (for NLLB/SeamlessM4T)
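One plausible way to turn the `--device` choice into a PyTorch device string is sketched here. The mapping (CUDA first, then MPS, then CPU) and both function names are assumptions for illustration; the tool itself may choose differently.

```python
from typing import Tuple


def resolve_device(requested: str, cuda_ok: bool, mps_ok: bool) -> str:
    """Map auto|cpu|gpu to a device string (assumed priority: cuda, mps, cpu)."""
    if requested == "cpu":
        return "cpu"
    if requested in ("auto", "gpu"):
        if cuda_ok:
            return "cuda"
        if mps_ok:
            return "mps"
        if requested == "gpu":
            # An explicit GPU request with no backend is treated as an error here.
            raise RuntimeError("No GPU backend available")
        return "cpu"
    raise ValueError(f"Unknown device option: {requested!r}")


def detect_backends() -> Tuple[bool, bool]:
    """Probe PyTorch for CUDA and MPS; returns (False, False) if torch is missing."""
    try:
        import torch
    except ImportError:
        return False, False
    mps = getattr(torch.backends, "mps", None)
    return torch.cuda.is_available(), bool(mps and mps.is_available())
```

Usage would be along the lines of `resolve_device("auto", *detect_backends())`.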

### Parallelism & batching

- `--workers <int>`: number of parallel workers when the CPU device is selected.
- `--batch <int>`: batch size per worker.
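How the two options interact can be illustrated with a small sketch: the input is cut into batches of `--batch` lines, and up to `--workers` batches are processed at once. The helpers `chunked` and `translate_all` are hypothetical, `translate_batch` stands in for a real model call, and threads are used only to keep the example self-contained; the tool may use processes or another scheme.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def chunked(items: List[str], size: int) -> List[List[str]]:
    """Split items into consecutive batches of at most `size` elements."""
    if size < 1:
        raise ValueError("batch size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]


def translate_all(
    lines: List[str],
    translate_batch: Callable[[List[str]], List[str]],
    workers: int,
    batch: int,
) -> List[str]:
    """Translate batches in parallel and return the lines in their original order."""
    batches = chunked(lines, batch)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Executor.map yields results in input order, so cue order is preserved.
        results = pool.map(translate_batch, batches)
        return [line for out in results for line in out]


# Stand-in "translator" that just upper-cases each line.
print(translate_all(["a", "b", "c"], lambda b: [s.upper() for s in b], workers=2, batch=2))
```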

### Context granularity

- `--context {line|cue|smart}`
  - `line`: translates each line independently (fastest, but least context).
  - `cue`: translates per cue (the whole block of text shown between one timecode pair; may contain 1–2 lines). This keeps the lines in a cue together, which usually reads better than per-line.
  - `smart`: sentence-aware. The tool temporarily groups adjacent cues until it detects a likely sentence boundary (based on punctuation and simple heuristics), translates that group as one sentence, then re-splits the result back to the original cues/lines. This helps avoid mid-sentence breaks and improves phrasing where a sentence spills across multiple cues. In short, it prefers breaking at natural linguistic boundaries when possible. It usually produces the best results but can introduce artifacts. The GUI always uses this mode, and it is highly recommended.

- `--max-span-cues <int>`: sets an upper bound on how many consecutive cues `smart` is allowed to bundle into one sentence group (e.g., `--max-span-cues 4`). If a sentence boundary isn’t found before the cap, the group is cut at the cap to keep batches small and responsive; the output is still mapped back to the same number of cues/lines you started with. A “cue” here means the numbered SRT block with timestamps plus 1–2 text lines. I have yet to encounter a sentence longer than 4 lines of subtitles.
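The grouping step of `smart` mode, including the `--max-span-cues` cap, can be sketched as follows. This is a simplified illustration under stated assumptions: `group_cues` is a hypothetical function, the boundary test is a plain punctuation check rather than the tool's actual heuristics, and re-splitting the translated text back onto the cues is not shown.

```python
import re
from typing import List

# Assumed boundary rule: the cue text ends with sentence-final punctuation.
SENTENCE_END = re.compile(r"[.!?…]\s*$")


def group_cues(cues: List[str], max_span_cues: int = 4) -> List[List[int]]:
    """Return groups of cue indices; each group ends at a boundary or at the cap."""
    groups: List[List[int]] = []
    current: List[int] = []
    for index, text in enumerate(cues):
        current.append(index)
        at_boundary = bool(SENTENCE_END.search(text))
        if at_boundary or len(current) >= max_span_cues:
            groups.append(current)
            current = []
    if current:
        # Trailing cues without closing punctuation still form a group.
        groups.append(current)
    return groups


cues = ["I never thought", "that it would end", "like this.", "Really?"]
print(group_cues(cues))                   # [[0, 1, 2], [3]]
print(group_cues(cues, max_span_cues=2))  # [[0, 1], [2], [3]]
```

Each group would be translated as one unit, and the result distributed back over the same cue indices so the cue count never changes.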

### I/O

- `--out <path>`: custom output path.
- `--no-progress`: disable CLI progress bar.

### Ollama

- `--ollama-model <tag>`: e.g., `phi4` (recommended if available).
- `--ollama-host <host>` / `--ollama-port <port>`
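For orientation, the options documented in this section could be declared with `argparse` roughly as below. This is a sketch and not the script's real parser: the function name `build_parser` and every default value are assumptions, chosen only to match the examples in this README.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical parser mirroring the documented flags; defaults are assumed."""
    parser = argparse.ArgumentParser(prog="srt_translator.py")
    parser.add_argument("input", help="path to the .srt file")
    parser.add_argument("target_language", help="name (German) or NLLB code (deu_Latn)")
    parser.add_argument("--src", default=None, help="source language; auto-detect if omitted")
    parser.add_argument("--engine", choices=["nllb", "seamless", "ollama"], default="nllb")
    parser.add_argument("--device", choices=["auto", "cpu", "gpu"], default="auto")
    parser.add_argument("--workers", type=int, default=1)
    parser.add_argument("--batch", type=int, default=8)
    parser.add_argument("--context", choices=["line", "cue", "smart"], default="smart")
    parser.add_argument("--max-span-cues", type=int, default=4)
    parser.add_argument("--out", default=None, help="custom output path")
    parser.add_argument("--no-progress", action="store_true")
    parser.add_argument("--ollama-model", default="phi4")
    parser.add_argument("--ollama-host", default="localhost")
    parser.add_argument("--ollama-port", type=int, default=11434)
    return parser


args = build_parser().parse_args(["input.srt", "German", "--engine", "ollama"])
print(args.engine, args.ollama_model, args.max_span_cues)
```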

### Prompt customization (LLM)

- Edit `_ollama_system_prompt()` in `srt_translator.py` to adjust style/constraints.

## Notes & Tips

- **Choosing an engine:** Start with SeamlessM4T for a strong baseline. Use NLLB on smaller machines. Switch to Ollama when you can spare resources and want the best possible output from a strong local LLM (try phi4 if available).

- **Target codes:** Using NLLB codes (e.g., `deu_Latn`, `eng_Latn`) is precise and avoids ambiguity.

## Troubleshooting

- **Transformers models fail to load:** Verify your PyTorch install matches your hardware (CPU/CUDA/MPS).

- **Ollama engine errors:** Confirm `ollama serve` or the desktop app is running and the model tag exists locally (`ollama list` / `ollama pull <model>`).

- **Large downloads:** The first run will download large AI models (NLLB around 2.5GB, SeamlessM4T around 10GB, Ollama model depending on your choice).
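The Ollama check can also be scripted. The sketch below queries the server's `/api/tags` endpoint, which lists locally available models, and looks for a given model name. The helper names are hypothetical, and the response is assumed to have the shape `{"models": [{"name": "phi4:latest", ...}]}`.

```python
import json
import urllib.request
from typing import List


def local_model_names(tags_payload: dict) -> List[str]:
    """Extract model names from an /api/tags response body (assumed shape)."""
    return [m.get("name", "") for m in tags_payload.get("models", [])]


def has_model(tags_payload: dict, wanted: str) -> bool:
    """True if `wanted` matches a local model, with or without an explicit tag."""
    for name in local_model_names(tags_payload):
        if name == wanted or name.split(":", 1)[0] == wanted:
            return True
    return False


def fetch_tags(host: str = "localhost", port: int = 11434, timeout: float = 5.0) -> dict:
    """Query the Ollama server; urlopen raises URLError if it is not reachable."""
    url = f"http://{host}:{port}/api/tags"
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.load(response)
```

With the server running, `has_model(fetch_tags(), "phi4")` returning `False` suggests that `ollama pull phi4` is still needed; a connection error suggests the server is not running.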