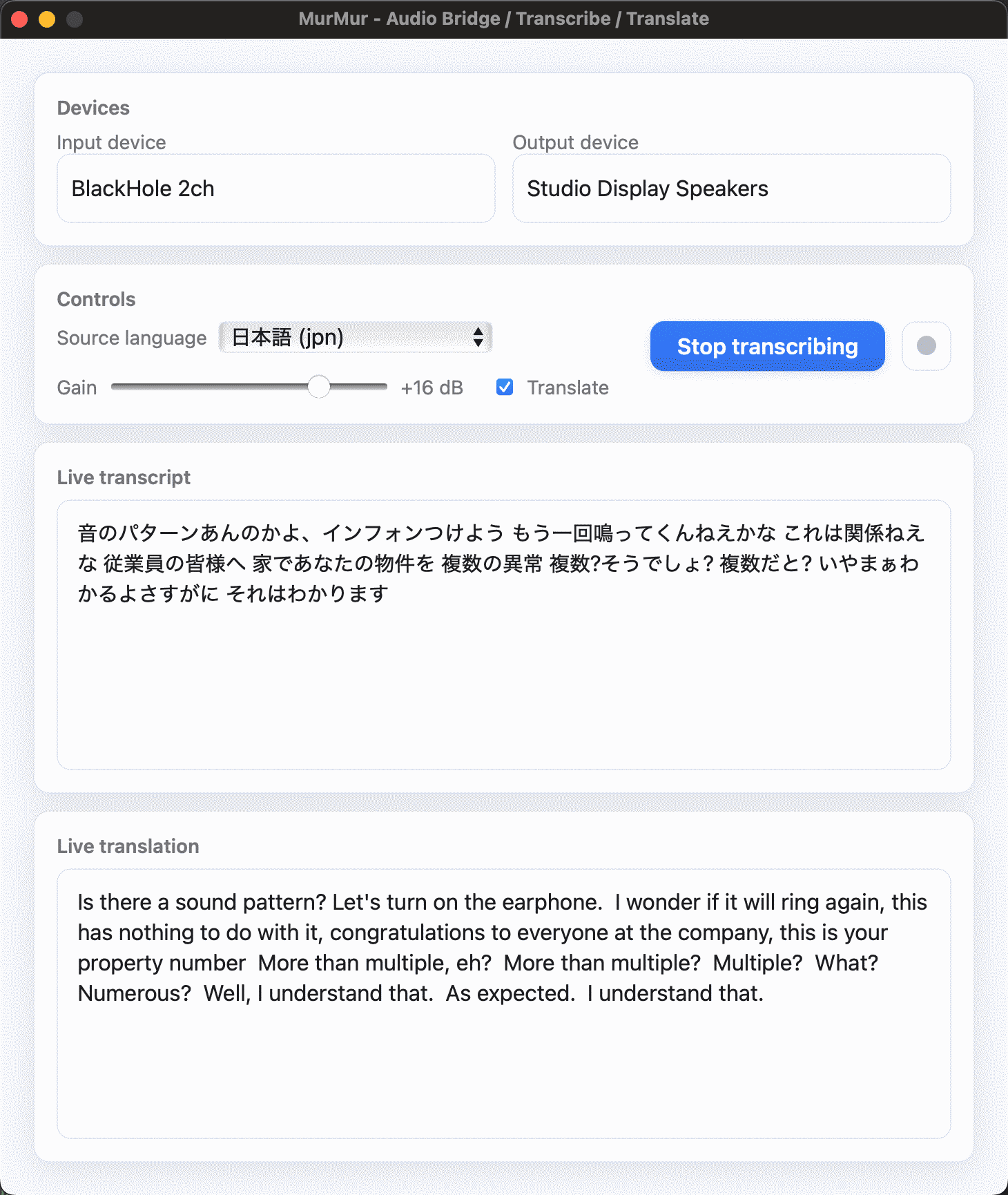

MurMur — Audio Bridge / Transcribe / Translate

MurMur is a lightweight desktop app that routes, transcribes, translates, and records system audio in real time — all locally.

- ASR (Automatic Speech Recognition) engine: faster-whisper, driven by a local Whisper model for fast, memory-efficient transcription.

- Live client: “nearly-live” Whisper pipeline for continuous updates.

- Translation: uses Whisper’s built-in translate=True path (to English).

- Audio bridge: pick input/output devices, loop back desktop audio, add virtual gain, and capture to MP3 (via ffmpeg).

- Bonus use-case: route desktop audio into Shazam for Mac for highly reliable song ID (no mic/room noise).

Note: Earlier builds experimented with NLLB and SeamlessM4T for wider language translation; the current MurMur does not use them. Whisper can transcribe a wide range of languages, but it translates only into English. The recording feature exists so that you can pass captured audio to other (non-real-time) transcription or translation software.

Features

- Two-pane UI: Live transcript + optional live English translation.

- Source language: Auto-detect (Whisper) or manually set.

- Audio routing: Low-latency loopback with adjustable virtual gain.

- Recording: One-click capture to MP3.
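The adjustable virtual gain can be pictured as a multiplier applied to each audio block before it reaches the output. The sketch below is a minimal illustration, not MurMur's actual code: it assumes the gain is expressed in decibels and that samples are floats in [-1.0, 1.0]. The names db_to_linear and apply_gain are hypothetical.

```python
from typing import Iterable, List


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in decibels to a linear amplitude multiplier."""
    return 10.0 ** (gain_db / 20.0)


def apply_gain(samples: Iterable[float], gain_db: float) -> List[float]:
    """Scale float samples and hard-clip the result to [-1.0, 1.0].

    Assumption: samples are normalized floats, as PortAudio's float32
    format delivers them. Clipping keeps a large boost from overflowing.
    """
    factor = db_to_linear(gain_db)
    return [max(-1.0, min(1.0, s * factor)) for s in samples]


# Example: boost a short block by 6 dB (roughly doubles the amplitude).
boosted = apply_gain([0.1, -0.25, 0.8], gain_db=6.0)
```

In a real loopback callback the same scaling would more likely be done on a NumPy array in one vectorized step; the list version here only keeps the example dependency-free.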

Requirements

- Python 3.9+

- ffmpeg on PATH (for MP3 export) — installable via Homebrew.

- Virtual audio sink (macOS) — install one of:

  - BlackHole (open-source, zero-latency loopback).

  - Background Music (adds a virtual device + per-app volume).

  - Soundflower (classic virtual device).

  - Loopback (commercial, flexible routing UI).

These create a virtual output/input so one app’s audio can be captured by another (e.g., system output → MurMur input).
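To confirm that a virtual device is visible to Python, you can search the device list that python-sounddevice reports. The following is a rough sketch under stated assumptions: find_device and find_system_device are hypothetical helper names, and the "BlackHole" fragment is only an example. The only sounddevice call used is query_devices().

```python
from typing import Iterable, Mapping, Optional


def find_device(devices: Iterable[Mapping], name_fragment: str,
                kind: str = "input") -> Optional[int]:
    """Return the index of the first matching device, or None.

    `devices` should look like the entries from sounddevice.query_devices():
    mappings with 'name', 'max_input_channels' and 'max_output_channels'.
    """
    channel_key = "max_input_channels" if kind == "input" else "max_output_channels"
    for index, dev in enumerate(devices):
        if name_fragment.lower() in dev["name"].lower() and dev[channel_key] > 0:
            return index
    return None


def find_system_device(name_fragment: str, kind: str = "input") -> Optional[int]:
    """Search the real device list (requires sounddevice and PortAudio)."""
    import sounddevice as sd  # imported lazily so find_device works without it
    return find_device(sd.query_devices(), name_fragment, kind)


# Example with a hand-written device list instead of real hardware:
fake_devices = [
    {"name": "MacBook Pro Speakers", "max_input_channels": 0, "max_output_channels": 2},
    {"name": "BlackHole 2ch", "max_input_channels": 2, "max_output_channels": 2},
]
blackhole_index = find_device(fake_devices, "blackhole", kind="input")
```

On a machine with a virtual device installed, find_system_device("BlackHole") should return a usable input index; None suggests the driver is not installed or not yet visible to PortAudio.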

Install

Create a venv and install dependencies:

python -m venv .venv && source .venv/bin/activate

python -m pip install --upgrade pip

pip install pywebview sounddevice huggingface_hub torch whisper-live numpy

- pywebview: lightweight native window hosting HTML/JS.

- python-sounddevice: PortAudio bindings for low-latency I/O.

Quick Start

- Install and select a virtual device (e.g., set system output to BlackHole 2ch).

- Run the app:

python audiobridge-gui.py

- In Devices:

  - Input → the virtual device (captures desktop audio), or your mic.

  - Output → your speakers/headphones (or another virtual sink, or no output at all).

- Click Transcribe → live captions appear; toggle Translate to get English translation output via Whisper.

- Use the Gain slider for loopback level.

- Tap the square Record button to capture a timestamped MP3 (requires ffmpeg).

Tip — Shazam workflow: set system output = BlackHole, MurMur input = BlackHole, then run Shazam for Mac to ID what’s playing on your desktop with no ambient noise.
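For the recording step, one plausible way to produce a timestamped MP3 is to pipe raw PCM into an ffmpeg subprocess. This is a sketch, not MurMur's implementation: the file-name pattern, sample rate, channel count, and bitrate are all assumptions, and the function names are hypothetical. The ffmpeg options themselves are standard (-f s16le, -ar, -ac, -codec:a libmp3lame, -b:a).

```python
import datetime as dt
import subprocess
from typing import List, Optional


def timestamped_name(now: Optional[dt.datetime] = None, prefix: str = "murmur") -> str:
    """Build a file name such as murmur-20240102-030405.mp3 (assumed pattern)."""
    now = now or dt.datetime.now()
    return f"{prefix}-{now:%Y%m%d-%H%M%S}.mp3"


def ffmpeg_mp3_command(out_path: str, sample_rate: int = 48000,
                       channels: int = 2, bitrate: str = "192k") -> List[str]:
    """Return an ffmpeg command that encodes 16-bit PCM from stdin to MP3."""
    return [
        "ffmpeg", "-y",                      # overwrite output without asking
        "-f", "s16le",                       # raw signed 16-bit little-endian input
        "-ar", str(sample_rate), "-ac", str(channels),
        "-i", "pipe:0",                      # read audio from stdin
        "-codec:a", "libmp3lame", "-b:a", bitrate,
        out_path,
    ]


def start_recorder(out_path: str) -> subprocess.Popen:
    """Start ffmpeg; write PCM bytes to .stdin, then close it to finish the file."""
    return subprocess.Popen(ffmpeg_mp3_command(out_path), stdin=subprocess.PIPE)
```

A caller would write each captured block to the process's stdin and close it when recording stops, so that ffmpeg can finalize the MP3.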

Engines & Model Notes

- Transcription: faster-whisper, a Whisper reimplementation using CTranslate2 for speed and lower memory usage. You can swap model sizes (e.g., small/base/large-v3) as needed.

- Live pipeline: WhisperLive-style continuous client for “near-real-time” updates.
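Outside the live pipeline, the same engine can be tried on a recorded file. The sketch below calls faster-whisper directly and is not MurMur's own code path: the helper names are hypothetical, and the device and compute_type settings are assumptions chosen to run on a CPU. In faster-whisper, English translation is requested with task="translate".

```python
from typing import Iterator


def format_segment(start: float, end: float, text: str) -> str:
    """Render one transcript segment with its time range."""
    return f"[{start:.2f}s -> {end:.2f}s] {text.strip()}"


def transcribe_file(path: str, model_size: str = "small",
                    translate: bool = False) -> Iterator[str]:
    """Yield formatted segments for an audio file.

    The model is downloaded on first use. Swap model_size for
    "base", "large-v3", etc. to trade speed against accuracy.
    """
    from faster_whisper import WhisperModel  # lazy import: heavy dependency

    model = WhisperModel(model_size, device="cpu", compute_type="int8")
    task = "translate" if translate else "transcribe"
    segments, _info = model.transcribe(path, task=task)
    for seg in segments:  # segments is a generator; decoding happens here
        yield format_segment(seg.start, seg.end, seg.text)


# Formatting can be checked without loading a model:
line = format_segment(0.0, 2.5, " hello ")
```

Calling transcribe_file on an MP3 captured with the Record button, with translate=True, would yield English lines for non-English speech.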

Packaging (optional)

- macOS bundles can be built with PyInstaller (not included here).

- Include the provided 1024×1024 icon and ensure ffmpeg is present on target systems (or ship a static build).

Troubleshooting

- No audio into MurMur: ensure the virtual device is selected as both system output and MurMur input. For some drivers, restarting CoreAudio can help after install.

- sounddevice errors: ensure PortAudio is available/installed correctly.

- Recording fails: confirm ffmpeg is installed and on PATH.
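The last two checks can be scripted. This is a small diagnostic sketch using only the standard library; check_environment is a hypothetical name, and it only tests whether ffmpeg is on PATH and whether the sounddevice package is installed. It does not prove that PortAudio itself loads.

```python
import importlib.util
import shutil
from typing import Dict


def check_environment() -> Dict[str, bool]:
    """Report whether the external pieces the app relies on can be found."""
    return {
        # shutil.which returns None when the executable is not on PATH.
        "ffmpeg_on_path": shutil.which("ffmpeg") is not None,
        # find_spec locates the package without importing it, so a broken
        # PortAudio install will not raise here; it only shows up on import.
        "sounddevice_installed": importlib.util.find_spec("sounddevice") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_environment().items():
        print(f"{name}: {'ok' if ok else 'MISSING'}")
```

If sounddevice_installed is reported as ok but importing sounddevice still fails, the PortAudio library is the likely culprit.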

Privacy

All processing runs locally. Audio never leaves your machine unless you route it to cloud software yourself.

Acknowledgements

- faster-whisper by SYSTRAN.

- WhisperLive (real-time pipeline inspiration).

- Pywebview and python-sounddevice communities.

- BlackHole, Background Music, Soundflower, Loopback for virtual audio devices.